Paralegal Guide: Detecting AI in Legal Documents

If you are a paralegal, you probably already know this: AI-assisted drafting is now common in contracts, briefs, and discovery.

However, undocumented AI use can introduce hallucinations, compliance issues, and evidentiary challenges that create problems for your clients.

To protect the integrity and standing of your law firm, you can use AI detection. Use it not as a way to generate accusations against the people you work with, but as a verification and risk-mitigation tool. Knowledgeable paralegals should then review any documents, or sections of documents, flagged as AI-generated.

Detecting AI in Legal Documents

AI detection for legal documents involves analyzing writing patterns to estimate whether the text was likely generated by large language models.

For example, if you use an AI detection tool to scan a brief, the tool looks at the writing patterns in the brief and identifies sections that were likely generated by AI.

AI legal document review differs from plagiarism review. Plagiarism review checks whether text has appeared elsewhere without credit. AI detection estimates whether, and to what extent, AI was used to generate the text.

Detecting AI in legal text is challenging because legal writing's formal tone and technical specificity are also common traits of AI-generated text. For this reason, many general-purpose AI detection tools struggle to distinguish human-written legal text from AI-generated text. Keep this in mind when you evaluate tools for yourself and your firm.

When analyzing legal text, Pangram evaluates the text’s structure, predictability, and specific statistical signals.

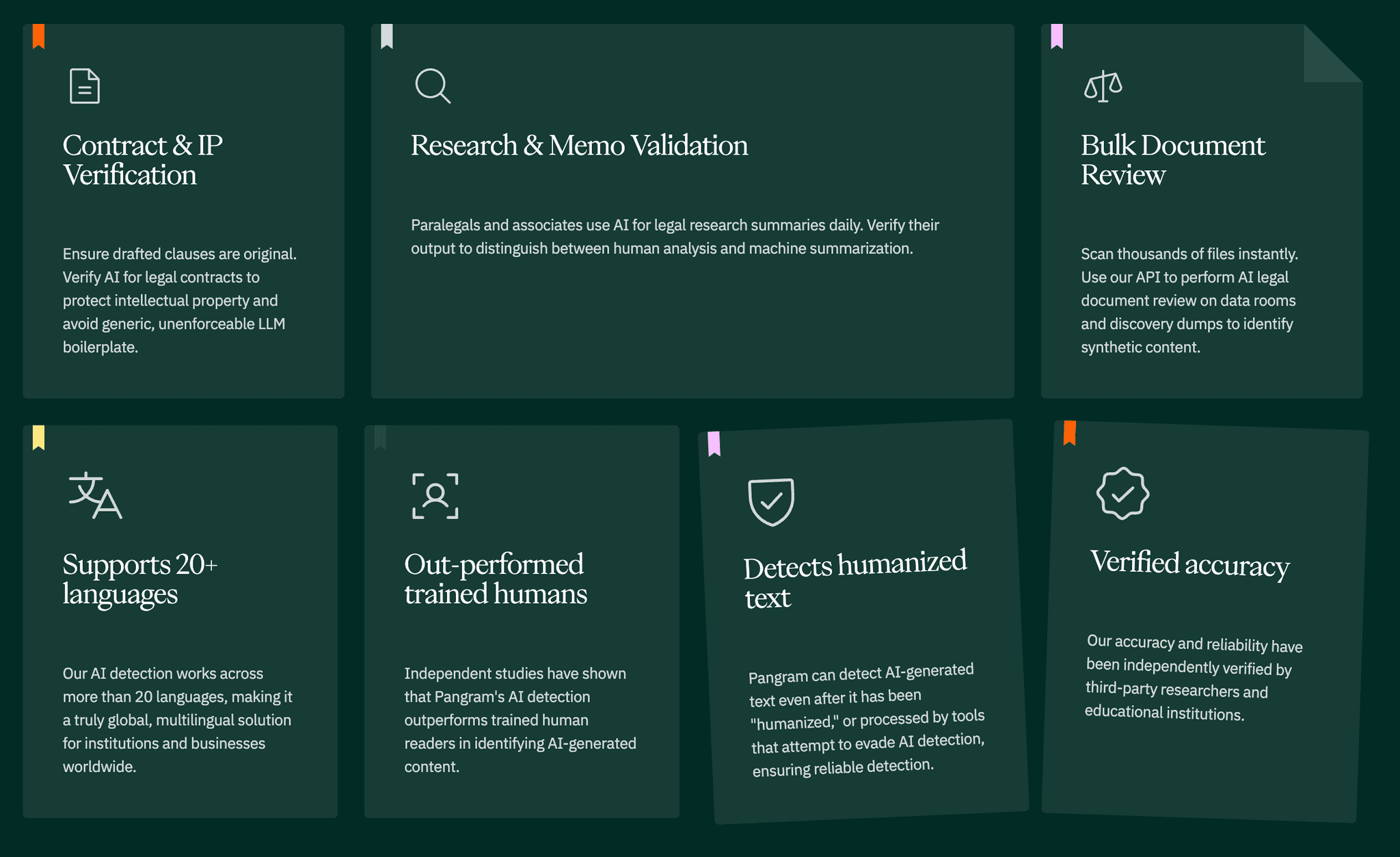

Pangram - Legal Document AI Detection Features

Can AI Detection Be Trusted for Legal Work?

Yes, well-designed AI detection can be accurate on legal text. However, detection should be treated as a probabilistic signal, not as definitive proof.

Even the most reliable AI detectors are not perfect. A low false-positive rate is not a zero false-positive rate, and false positives do happen. If an AI detector indicates that a legal document was generated with AI, review the text yourself before drawing a final conclusion.

Why AI detection matters for law: courts and compliance teams treat probabilistic evidence of AI generation with caution, and legal writing produced with AI can contain serious errors, inaccuracies, or unsupported claims.

Common Legal Documents Where AI Use Creates Risk

The following common legal documents come with major risks when AI is used to generate them:

- Contracts & amendments: Hallucinations are the main risk. AI often hallucinates (invents) cases, laws, and regulations that do not exist. If your contract or amendment cites laws or regulations that don't exist, the validity of the document is at risk, and you and your firm could face sanctions.

- Discovery responses: Discovery responses should include only the requested, relevant information. Because AI often invents information and tends toward verbosity, AI-generated text in a discovery response is at high risk of including factually incorrect but legitimate-sounding information. Polluted discovery responses can harm your client and create major headaches.

- Internal memos and summaries: Memos and summaries generated with AI can contain hallucinations, such as laws that don't exist and events that didn't happen, which can mislead attorneys and waste their time.

- Client-submitted drafts: More and more people use generative AI to write, so there is a real chance that a client will submit AI-generated documents. Whether it's discovery documents, questionnaires, or contracts, it's critical to know where AI was used so you can best protect your client.

AI Detection vs. Manual Review: Use Both!

Use AI detection to highlight the sections of a document that need manual review. The two catch different problems: AI detection can flag statistical irregularities that suggest AI use, while manual review can catch content that is incorrect or harmful.

Combining AI detection with manual review reduces downstream liability. It helps you determine whether a piece of content was written with AI and, if it was, find and fix the legal flaws that could hurt your client.

How Paralegals Should Handle a Document Flagged as AI

When a document is flagged as AI-generated, paralegals should follow a step-by-step workflow that supports the colleagues they work with, the lawyers who run the firm, and the clients they serve:

- Review your internal "acceptable AI-use" policy if you have one.

- Verify the flag by running the document through the AI detector again and reading it yourself.

- Check the document history and metadata. For example, if the document was empty before a large block of text was pasted in, the text may have been produced elsewhere, possibly with AI.

- Combine the document history, metadata, and AI detector results as evidence when escalating a concern that a document was AI-generated.

- Document all the evidence you gather, including how you obtained it and what it suggests, so that you have an audit trail.

Keep a Human in the Loop

A "human in the loop" is essential. AI detection shows you and your team exactly where to look, so you can review documents faster and more effectively.

Combining AI detection with manual review also gives you visibility into, and confidence in, every document your team produces.

How Attorneys Should Handle a Document Flagged as AI

If a document is flagged as containing AI-generated content, use this step-by-step guide:

- Review your firm's or company's internal "acceptable AI-use" policy.

- Rescan the document to confirm that the results are consistent.

- Check the document history and metadata. For example, if the document was empty before a large block of text was pasted in, the text may have been produced elsewhere, possibly with AI.

- Combine the document history, metadata, and AI detector results as evidence when escalating a concern that a document was AI-generated.

- Document all the evidence you gather, including how you obtained it and what it suggests, so that you have an audit trail.

Choosing the Right AI Detection Tool for Legal Teams

Choosing the right AI detection tool for your legal team depends on your workflow, your firm's AI policy, your practice area, and the clients you serve. Look for an AI detector that:

- Reliably detects AI-generated text across multiple legal writing styles.

- Has false-positive controls that keep human-written documents from being flagged as AI.

- Comes with privacy and non-training guarantees to protect your firm and clients.

- Offers enterprise-level auditability and can be rolled out across your entire organization.

- Quantifies the extent of AI use within a particular document.

Among the tools on the market, Pangram is the only tool robust enough to consistently distinguish human-written legal text from AI-generated text. It integrates into existing legal workflows, supports the highest level of enterprise data security and privacy controls, and has a consistently low false-positive rate.

Detection tools help attorneys preserve document integrity and protect their firm, company, and clients.

Help your legal team by using Pangram.
